As a mathematician, I often see people confuse centimeters and millimeters. Both are units of length, but they differ in size and in how they are typically used. Understanding the distinction matters not just for mathematicians but for anyone who meets these units in daily life. In this article, we will discuss which of the two is smaller, how they differ, and how to use them correctly.

Definition and relationship between Centimeters and Millimeters

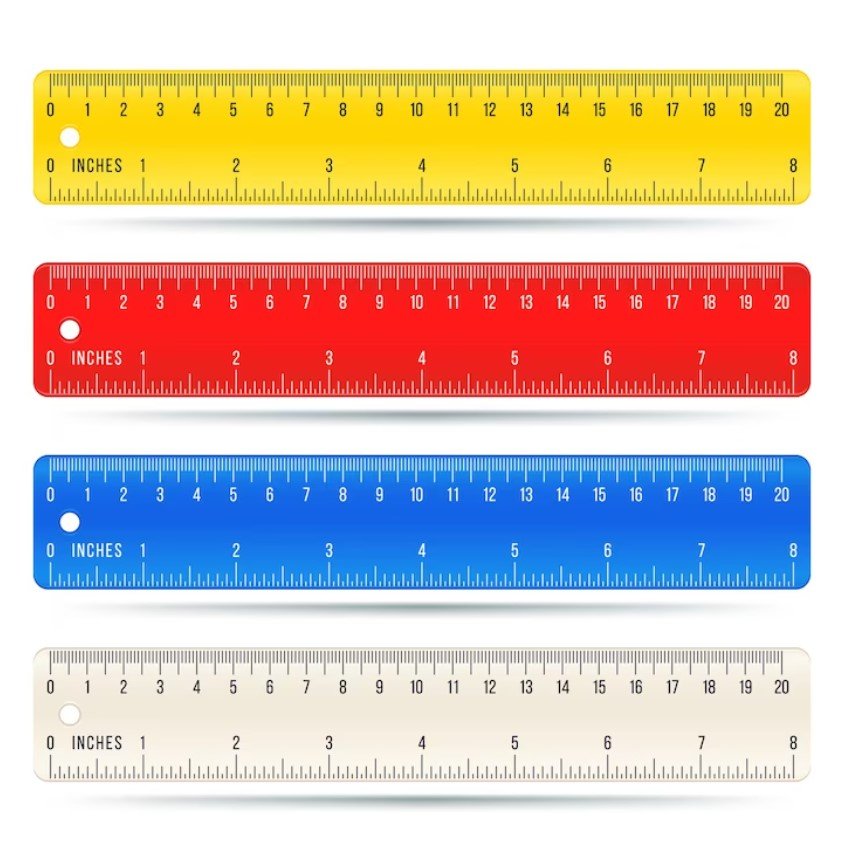

- Centimeters and millimeters are both metric units of length, used to measure the size of objects and the distances between them.

- Centimeters (cm) are larger than millimeters (mm). One centimeter is equivalent to 10 millimeters.

- The two units differ by a factor of ten: every centimeter consists of ten millimeters, and every millimeter is one tenth of a centimeter.

This relationship is all you need to convert between the two units: multiply centimeters by ten to get millimeters, and divide millimeters by ten to get centimeters. The millimeter is therefore the smaller unit. Keep in mind that a centimeter is itself a small unit compared with meters and kilometers.
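The conversion can be sketched in a few lines of Python. This is a minimal illustration, not a library: the names `MM_PER_CM`, `cm_to_mm`, and `mm_to_cm` are chosen here for the example.

```python
MM_PER_CM = 10  # one centimeter is exactly ten millimeters


def cm_to_mm(cm):
    """Convert a length in centimeters to millimeters."""
    return cm * MM_PER_CM


def mm_to_cm(mm):
    """Convert a length in millimeters to centimeters."""
    return mm / MM_PER_CM


print(cm_to_mm(3))   # 30
print(mm_to_cm(25))  # 2.5
```

Multiplying goes from the larger unit to the smaller one, and dividing goes back.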

In most cases, we use millimeters for smaller objects like jewelry, screws, nails, etc., while for relatively larger objects like doors, windows, and bookshelves, we use centimeters as the ideal unit of measurement.

The correct way to use centimeters and millimeters

- While both centimeters and millimeters can be used to measure the same object, choosing the appropriate unit is essential. It helps avoid confusion and makes results easier to read.

- When a measurement is given in millimeters, make sure anything it is compared against (a size label, a specification, another measurement) is in millimeters too, or convert one of them first.

For example, a piece of jewelry that is three centimeters long measures 30 millimeters. Both figures describe exactly the same length, so either one is accurate; what matters is stating the unit and sticking to it. Where detail finer than a centimeter is not needed, the centimeter figure is usually simpler to read.

Although millimeters are particularly useful for small objects that require high precision, centimeters are the better primary unit for everyday measurements and for tasks that need only moderate precision.

How to use Centimeters in everyday situations

- Centimeters are commonly used in measuring larger objects that require an adequate level of precision.

- Centimeters work well in everyday situations such as measuring furniture or a person's height. They do not apply to weight, which is measured in grams or kilograms.

Let’s say you plan to buy a new TV stand. A good way to work out which stand fits is to measure the width, depth, and height of your TV in centimeters and compare those figures with the dimensions listed for each stand. Using the same unit for both makes the comparison straightforward.

Centimeters also turn up in cooking, but only for lengths, such as the diameter of a cake pan or the thickness of rolled dough. A quantity such as two cups of flour is a volume, not a length, so it is measured in cups, milliliters, or grams rather than centimeters. Measuring it incorrectly could spoil the recipe, which is why using the right unit for each quantity matters.

Advantages and Disadvantages of Using Millimeters and Centimeters

- Millimeters are best suited to very small items that require high precision, while centimeters are more suited to objects that have an average or large size.

- Because their graduations are finer, measuring instruments marked in millimeters allow more precise readings.

- However, since centimeters are exactly ten times larger than millimeters, centimeter markings are easier to read on measuring instruments and easier to relate to everyday objects.

With centimeters, it is easier to estimate the size of something. Suppose an object is three centimeters long; most people can picture that length and judge whether the object suits their needs more readily than if the same length were stated as 30 millimeters.

In contrast, if you needed to measure very small items like the distance between pixels on your computer screen, you would need to use millimeters or even micrometers to get the necessary precision levels.

Common misconceptions about Centimeters and Millimeters

- Many people are not fully aware of the difference between centimeters and millimeters, which leads to misconceptions about the two units.

- A common misconception is that cm and mm are interchangeable.

It is crucial to understand that, just like kilometers and meters, cm and mm are not interchangeable: the same number means a different length in each unit. One centimeter equals ten millimeters, so a measurement of 2 cm is exactly 20 mm, not 2 mm.

It is also common for people to treat cm and mm as separate systems, which causes measurement inaccuracies. Both belong to the metric system and convert into each other exactly. For an accurate result, use one unit consistently throughout a given measurement, converting any values recorded in the other unit first.
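To see why consistency matters, here is a small sketch that adds up lengths recorded in mixed units. The part lengths and the names `MM_PER_UNIT` and `to_mm` are made up for illustration. Each value is converted to millimeters before summing, and the result is compared with what happens if the units are ignored.

```python
# Millimeters per unit, for the two units discussed in this article.
MM_PER_UNIT = {"mm": 1, "cm": 10}


def to_mm(value, unit):
    """Express a length in millimeters, whatever unit it was recorded in."""
    return value * MM_PER_UNIT[unit]


# Hypothetical part lengths, recorded in a mix of units.
parts = [(2, "cm"), (5, "mm"), (1.5, "cm")]

total_mm = sum(to_mm(value, unit) for value, unit in parts)
naive_total = sum(value for value, _ in parts)  # ignores the units

print(total_mm)     # 40.0 (millimeters)
print(naive_total)  # 8.5 (not a meaningful length in either unit)
```

The naive sum is neither 8.5 cm nor 8.5 mm, which is the kind of inaccuracy that treating the two units as interchangeable produces.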

Other Metric System Units to Know

The metric system is vast and has a multitude of different units of measurement. Here are a few other units, along with a brief description of their size:

- meters: the basic unit of length in the metric system

- kilometers: 1000 meters

- grams: the unit of mass to which metric prefixes are attached (the SI base unit itself is the kilogram)

- kilograms: 1000 grams

- liters: the basic unit of volume in the metric system

- milliliters: 1/1000th of a liter
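The same prefixes apply across meters, grams, and liters, so one small table of factors covers all of them. The sketch below is illustrative only: `PREFIX_FACTOR` and `convert` are names invented for this example, and `Fraction` is used so that the tenths and thousandths stay exact.

```python
from fractions import Fraction

# Size of each prefix relative to the unprefixed unit (meter, gram, or liter).
PREFIX_FACTOR = {
    "kilo": Fraction(1000),
    "": Fraction(1),
    "centi": Fraction(1, 100),
    "milli": Fraction(1, 1000),
}


def convert(value, from_prefix, to_prefix):
    """Convert a value between two prefixes of the same base unit."""
    return Fraction(value) * PREFIX_FACTOR[from_prefix] / PREFIX_FACTOR[to_prefix]


print(convert(3, "kilo", ""))         # 3000 (3 kilometers in meters)
print(convert(250, "milli", ""))      # 1/4  (250 milliliters in liters)
print(convert(2, "centi", "milli"))   # 20   (2 centimeters in millimeters)
```

The prefixes only scale a unit; they never change what is being measured, so a conversion from grams to liters has no place in this table.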

Conclusion

Understanding the difference between centimeters and millimeters is essential in everyday life. Both units measure length, and the choice between them depends on the size of the object and the precision required. As this article has shown, centimeters are better for everyday items such as furniture, while millimeters are ideal for items that require high precision, such as small jewelry. By using the appropriate unit and understanding that one centimeter is exactly ten millimeters, you avoid confusion, save time, and get accurate results.